Preemption as a Moral Duty

If AI Predicts Attack with 85% Confidence, Must We Strike First?

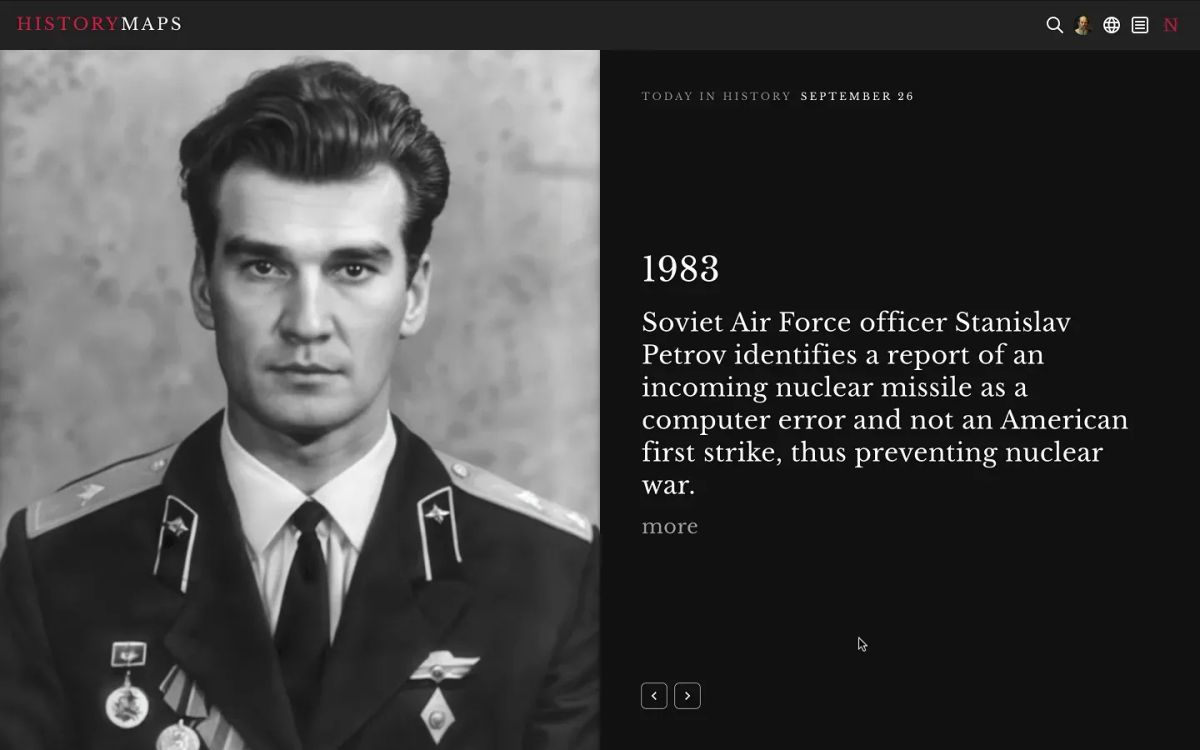

In 1983, a Soviet lieutenant colonel named Stanislav Petrov sat in a bunker watching a screen flash "MISSILE LAUNCH." The system told him the United States had fired five intercontinental ballistic missiles. Petrov's training said to report the strike to his superiors immediately, a report that could have set a retaliatory launch in motion. He did not. He judged the system was wrong. It was.

Petrov was human. He could weigh doubt, context, and intuition. He could decide that the absence of corroborating evidence mattered more than the certainty of the algorithm. More than forty years later, AI systems are being integrated into military targeting, threat assessment, and strike authorization at unprecedented speed, and the question now confronting us is what an AI system would have done in Petrov's place. Not whether it could have processed the data faster. Whether it would have had the capacity to hesitate.

This is not a thought experiment. It is the operational reality toward which multiple militaries are now moving, each for its own reasons, each under its own pressures. And the doctrine that makes it most dangerous is not new. It is the ancient logic of preemption: the idea that if you can predict an attack, you may be obligated to strike first. What is new is the claim that AI can provide the prediction.

The Architecture of Anticipation

The military rationale for a preemptive strike has always rested on prediction: the belief that an adversary is about to act and that waiting would be catastrophic. During the Cold War, this logic was held in check by mutually assured destruction—the certainty that any first strike would be met with annihilation. Deterrence worked, imperfectly, because both sides understood the costs.

What AI introduces is a different kind of confidence. Not the existential certainty of nuclear annihilation, but the statistical confidence of probabilistic prediction. A system that ingests satellite imagery, signals intelligence, communications intercepts, logistics data, and historical patterns and outputs a number: 85% likelihood of imminent attack.

The Pentagon's FY2026 budget request includes a record $14.2 billion for AI and autonomous research. The Replicator initiative, launched in 2023 to field thousands of autonomous drones, has been reorganized under a newly created Defense Autonomous Warfare Group within Special Operations Command. In January 2026, the Pentagon awarded its first contracts for AI-powered interceptor drones under Replicator's second phase. The stated purpose is deterrence in the Indo-Pacific. The unstated trajectory is toward systems that can detect, decide, and act faster than any human chain of command.

The logic driving this investment is not abstract. A December 2025 white paper from the Harvard Kennedy School's Belfer Center observed that states have already begun incorporating AI systems into their military postures and decision-making processes. Global military spending on AI doubled from $4.6 billion to $9.2 billion between 2022 and 2023, and is projected to reach $38.8 billion by 2028. Paul Scharre, a former Army Ranger who led the Pentagon working group that drafted its first policy on autonomous weapons, has warned that we may be approaching a "battlefield singularity," a point where the speed of combat outpaces human cognition entirely.

In this environment, the question is not whether AI will be used to predict threats. It already is. The question is what happens when a prediction becomes an instruction.

The Precedent That Already Exists


We do not need to speculate about what AI-assisted preemptive targeting looks like. We have a case study.

In its military campaign in Gaza, the Israel Defense Forces deployed a system known as Lavender—an AI-powered database that used machine learning to assign residents of Gaza a numerical score indicating the suspected likelihood of affiliation with an armed group. According to testimony from six Israeli intelligence officers published by +972 Magazine, Lavender at its peak generated a list of 37,000 Palestinian men as potential human targets. The system was used alongside Gospel, which identified buildings and structures for strikes, and "Where's Daddy?", which tracked when a designated target was at home.

The operational logic was precisely preemptive: identify potential threats before they materialize into attacks, and neutralize them. Officers reportedly accepted a 10% error rate—meaning roughly one in ten people flagged by the system may have had no connection to any armed group. Human review of Lavender's recommendations averaged approximately 20 seconds per target, often limited to confirming the target was male. Junior operatives were struck with unguided munitions at their homes, at night, when their families were present.

A classified Israeli military database reviewed in 2025 indicated that only 17% of those killed were combatants.

Whatever one's position on the broader conflict, the structural lesson is clear: when an AI system produces a confidence score and a military institution is under pressure to act, the human "in the loop" can become a formality. The machine generates the prediction. The institution treats the prediction as authorization. The 20-second review becomes the moral architecture of preemption.

This is not a failure of technology. Lavender reportedly performed within its designed parameters. It is a failure of institutional design—of the frameworks meant to govern how predictions translate into lethal action.

The Compression of Decision Time

The Lavender case involved targeting individuals. The more consequential version of this problem operates at the strategic level, where AI-driven threat assessment could compress the time between prediction and preemptive strike to minutes or seconds.


Consider the dynamics now playing out across multiple theaters. Ukraine has become the world's first large-scale laboratory for AI-enabled autonomous warfare. As of early 2026, Ukrainian forces have deployed over five million drones. AI-enabled autonomous navigation has increased strike success rates from 10-20% to 70-80% by removing reliance on human control and vulnerable communications links.

Just this week, Ukraine announced it is sharing its real-world combat data—the most operationally rich trove in the world—with allied governments and defense companies to train AI models for autonomous systems. Ukrainian Defense Minister Mykhailo Fedorov framed the stakes directly: the future of warfare belongs to autonomous systems.

On the Russian side, the S-70 Okhotnik-B heavy stealth drone is being developed for autonomous deep-strike missions. Both countries have established dedicated unmanned systems commands. The escalation ladder is being rebuilt with AI at every rung.

In the Indo-Pacific, the Pentagon's Defense Autonomous Warfare Group is driven by intelligence assessments identifying 2027 as a potential timeline for Chinese action regarding Taiwan. The Replicator program's entire strategic rationale is to deploy autonomous swarms capable of overwhelming larger conventional forces. China, meanwhile, has its own military-civil fusion strategy for AI integration.

Israel has deployed Iron Beam, an autonomous laser defense system that engages incoming threats at speeds no human operator could match. The system's existence is justified defensively—but it embeds the principle that machines must make targeting decisions when human reaction times are insufficient.


Each of these developments is presented as defensive or deterrent. Each is rational within its own strategic context. And each contributes to a cumulative dynamic that an Arms Control Association analysis described with precision: if both the weapons systems producing kinetic effects and the decision-support systems developing operational plans are autonomous, the risk of unintended escalation increases dramatically. An autonomous system that misidentifies a reconnaissance drone as a strike platform, or accidentally damages a nuclear delivery system during heightened alert, could trigger a cascade that no human has time to stop.

The battlefield singularity is not a metaphor. It is an engineering program.

The Moral Trap of Probabilistic Certainty

Here is where the essay's title question becomes a genuine trap—and where the reader should feel the ground shift.

If an AI system predicts an imminent attack with 85% confidence, does a leader have a moral duty to strike first?

The intuition says yes. If you can prevent an attack that will kill your citizens, how can you justify inaction? The logic of protection demands preemption. This is the same logic that has been invoked to justify both preemptive and preventive war, from the Six-Day War to the 2003 invasion of Iraq.

But the question contains a structural deception. It presents 85% as a fact about the world. It is not. It is a fact about a model. And models are built on assumptions, trained on historical data, and subject to the same errors, biases, and blind spots as the institutions that design them.

Consider what 85% confidence means operationally. If the system evaluates 100 potential threats and flags each at 85% confidence, then even if those scores are perfectly calibrated, roughly 15 of the predictions should be expected to be wrong. At the scale of modern military operations, where systems like Lavender can generate tens of thousands of targets, a 15% error rate produces catastrophic outcomes. And unlike human error, which is irregular and contextual, algorithmic error is systematic. It is wrong in the same way, at scale, every time.
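The arithmetic in the paragraph above can be made explicit. The sketch below assumes each flag is wrong independently with probability equal to one minus the stated confidence, which holds only if the scores are calibrated. It uses the essay's 85% figure and the roughly 37,000 people and 10% error rate reported for Lavender; the function name `expected_false_alarms` is hypothetical.

```python
import math

def expected_false_alarms(n_flagged: int, error_rate: float) -> tuple[float, float]:
    """Expected number of wrong flags and its binomial standard deviation.

    Assumption: each flag is wrong independently with probability
    `error_rate` (i.e. the confidence scores are calibrated). Correlated,
    systematic errors would widen the spread; this sketch ignores them.
    """
    mean = n_flagged * error_rate
    std = math.sqrt(n_flagged * error_rate * (1.0 - error_rate))
    return mean, std

# 100 threats, each flagged at 85% confidence, so a 15% expected error rate.
mean_100, std_100 = expected_false_alarms(100, 0.15)
print(f"100 flags at 85%: about {mean_100:.0f} wrong (+/- {std_100:.1f})")

# Roughly 37,000 people at the ~10% error rate reported for Lavender.
mean_lav, std_lav = expected_false_alarms(37_000, 0.10)
print(f"37,000 flags at 90%: about {mean_lav:,.0f} wrong (+/- {std_lav:.0f})")
```

With these inputs the expected counts are about 15 and about 3,700 people. The binomial spread assumes independent errors; systematic errors of the kind described above are correlated, so the real uncertainty would be larger than this sketch suggests.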

The deeper problem is that confidence scores create an illusion of precision that maps poorly onto the actual uncertainty of geopolitical decision-making. Whether a country is about to attack depends on leadership intentions, domestic politics, alliance dynamics, internal deliberations, and contingencies that no dataset fully captures. An AI system that processes satellite imagery of troop movements, logistics patterns, and communications intercepts may be excellent at pattern recognition. It cannot read the mind of a decision-maker who has not yet decided.

Yet the existence of a number—85%—changes the institutional dynamics. A leader who acts on 85% confidence and is right is vindicated. A leader who ignores 85% confidence and is wrong is culpable. The asymmetry of blame pushes institutions toward preemption regardless of whether the prediction is sound. The AI system does not just inform the decision. It shifts the moral burden.
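The asymmetry of blame can be written as a simple expected-cost rule. This is a toy model, not anything drawn from military doctrine: it assumes a leader weighs only the blame for a wrongful strike against the blame for a missed attack, and treats the model's score as the true probability of attack. The function names `strike_threshold` and `should_strike` are hypothetical.

```python
def strike_threshold(blame_wrongful_strike: float, blame_missed_attack: float) -> float:
    """Score above which striking minimizes a leader's expected blame.

    Toy model (an assumption): striking costs `blame_wrongful_strike` if no
    attack was coming; waiting costs `blame_missed_attack` if one was.
    Striking is "cheaper" when
        p * blame_missed_attack > (1 - p) * blame_wrongful_strike,
    i.e. when p exceeds the value returned here.
    """
    return blame_wrongful_strike / (blame_wrongful_strike + blame_missed_attack)


def should_strike(score: float, blame_wrongful_strike: float,
                  blame_missed_attack: float) -> bool:
    # The rule takes the model's score at face value: nothing here checks
    # whether the score is calibrated or the model is sound.
    return score > strike_threshold(blame_wrongful_strike, blame_missed_attack)


# Symmetric blame: the bar sits at 50%.
print(strike_threshold(1.0, 1.0))
# A missed attack is blamed four times as heavily: the bar drops to 20%.
print(strike_threshold(1.0, 4.0))
print(should_strike(0.85, 1.0, 4.0))
print(should_strike(0.30, 1.0, 4.0))
```

Under a four-to-one blame asymmetry, even a 30% score clears the bar. Nothing in the rule depends on whether the prediction is sound, only on how blame is distributed.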

What 156 Nations Understood

In November 2025, the UN General Assembly's First Committee passed a historic resolution calling for negotiations on a legally binding agreement on lethal autonomous weapons by the Seventh Review Conference of the Convention on Certain Conventional Weapons (CCW) in 2026.


The vote was overwhelming: 156 nations in favor.

Only five voted against—including the United States and Russia.

The gap between those two numbers tells the story. The vast majority of the world's nations recognize that delegating lethal decisions to autonomous systems creates risks that existing international humanitarian law cannot adequately address. Among the countries that disagree are two with some of the most advanced AI weapons programs and the strongest incentives to maintain freedom of action.

The 2026 Review Conference is increasingly described as a "finish line" for global diplomacy on autonomous weapons. If a binding framework is not reached, the speed of military AI innovation—driven by the very powers blocking agreement—will likely make any future regulation obsolete before it takes effect. The pre-proliferation window is closing.

But the governance challenge is not only about autonomous weapons in the conventional sense—drones that select and engage targets without human input. It is about the broader integration of AI into strategic decision-making: threat assessment systems that recommend preemptive action, decision-support tools that shape how commanders perceive risk, predictive analytics that compress the space for deliberation.

No treaty currently addresses the scenario in which a machine tells a head of state that attack is 85% likely and the institutional incentives favor acting on that number. This is not a weapons system. It is an epistemological infrastructure—a system that shapes what counts as knowledge in the highest-stakes decisions a government can make.

Five Questions That Should Keep You Awake

If this essay has done its work, the reader should not arrive at a comfortable answer. The point is not resolution. It is the recognition that existing categories—just war theory, international humanitarian law, doctrines of self-defense—were designed for a world in which humans made the predictions and bore the consequences. They are unprepared for a world in which machines make the predictions and institutions bear the consequences differently than individuals do.

- Who is accountable when a preemptive strike based on AI prediction turns out to be wrong? The commander who approved it? The analyst who reviewed the output for 20 seconds? The engineer who designed the model? The institution that set the confidence threshold? Accountability requires a locus. Algorithmic decision chains dissolve it.

- What happens to deterrence when both sides have predictive systems? If my AI tells me you are about to attack, and your AI tells you I am about to attack, and both systems are processing the same satellite data showing mutual mobilization, the result is not stability. It is a mirror trap in which each side's defensive posture confirms the other's threat assessment. The faster the systems operate, the less time exists to escape the loop.

- Can a democratic society authorize preemptive war on the basis of a probability score it cannot audit? Military AI systems are classified. Their training data is classified. Their error rates are classified. If a government strikes first on the basis of an AI prediction, no public can evaluate whether the prediction was sound. The democratic accountability that is supposed to govern the use of force becomes structurally impossible.

- Does the existence of predictive capability create a moral obligation to use it? This is the deepest trap. Once a state possesses a system that claims to predict attacks, choosing not to use it becomes a decision for which leaders can be blamed. The technology does not just enable preemption. It makes restraint politically expensive.

- What would Stanislav Petrov's AI have done? It would have processed the data. It would have noted the probability. It would not have hesitated, because hesitation is not a feature. It would not have felt doubt, because doubt is not an output. It would have followed its training, and in 1983 that training would have been wrong.
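The mirror trap in the deterrence question can be illustrated with a deliberately crude model. The sketch below is hypothetical and not drawn from any real system: each round, one side's system reads the other side's mobilization as a threat score, and that side mobilizes in proportion to the score, scaled by a gain. Mobilization is capped at 1.0 (full mobilization). The function name and parameters are illustrative assumptions.

```python
from typing import Optional

def rounds_to_full_mobilization(gain_a: float, gain_b: float,
                                start: float = 0.1,
                                max_rounds: int = 100) -> Optional[int]:
    """Toy 'mirror trap' between two sides' threat-prediction systems.

    Hypothetical model: side A mobilizes to gain_a times side B's last
    mobilization level, and side B to gain_b times side A's, each capped
    at 1.0. Returns the round in which both sides reach full mobilization,
    or None if the loop damps out within max_rounds.
    """
    a = b = start  # both sides begin with a small, "defensive" posture
    for round_no in range(1, max_rounds + 1):
        # Simultaneous update: each system reacts to the other's last posture.
        a, b = min(1.0, gain_a * b), min(1.0, gain_b * a)
        if a >= 1.0 and b >= 1.0:
            return round_no
    return None


# Each side reads the other's moves as 50% more threatening than they are.
print(rounds_to_full_mobilization(1.5, 1.5))
# Each side discounts the other's moves: the loop damps out.
print(rounds_to_full_mobilization(0.8, 0.8))
```

In this toy, the loop escalates whenever `gain_a * gain_b` exceeds 1: neither side has to be aggressive, only to read the other's defensive moves as slightly more threatening than they are. The model counts rounds, not seconds; the faster each round runs, the less wall-clock time a human has to step in before full mobilization.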

The Restraint We Cannot Automate

The honest conclusion is that there may be cases in which AI-assisted threat prediction saves lives. There may be scenarios in which faster processing of intelligence data allows leaders to act in time to prevent genuine attacks. The technology is not inherently evil. The question is whether the institutions deploying it have the capacity for restraint that the technology itself does not possess.

Restraint is a human capacity. It requires the ability to hold contradictory information, to weigh costs that are not quantifiable, to choose inaction in the face of uncertain threat. It requires what Petrov had in that bunker: the willingness to trust judgment over data.

The trajectory of military AI development over the past few years, from the Replicator drones to Lavender's kill lists to Ukraine's autonomous swarms to the Pentagon's $14.2 billion budget request, is moving in the opposite direction. Not because anyone has decided restraint is wrong, but because competitive pressure, institutional incentives, and the logic of preemption all push toward delegation to machines.

The question is not whether AI should assist military decision-making. That ship has sailed. The question is whether we build the institutional architectures—the oversight, the accountability, the deliberation time, the capacity to say no—before the speed of the systems makes those architectures impossible.

The clock is the confidence score. And it is ticking.